Translated by: Heart of Metaverse

Recently, the rise of DeepSeek has sparked widespread discussion among Silicon Valley venture capitalists and entrepreneurs. As an emerging force in artificial intelligence, DeepSeek's rapid development has prompted people to rethink the future of AI innovation, the dominance of the open-source model, and the sustainability of the traditional AI business model.

The core of this discussion is: Does DeepSeek represent a paradigm shift, or is it just a short-lived shock? How should existing AI companies respond to this change?

01. DeepSeek's innovation and advantages

DeepSeek emerged quickly in the AI developer community, topped the Hugging Face rankings, and became a dominant force in open-source AI.

Its design philosophy, centered around speed, cost-effectiveness, and accessibility, has won wide acclaim in the global AI research community. Unlike its competitors, DeepSeek operates at a very low cost, providing cutting-edge AI capabilities without relying on massive infrastructure.

Despite media speculation that the power dynamics in the AI field are shifting, the reality is more complicated: DeepSeek's innovations are prompting existing players to rethink their strategies, driving the industry toward leaner, more efficient AI models.

DeepSeek's success stems from its focus on efficiency and technological creativity. The company excels in code generation and natural language processing with its DeepSeek Coder and DeepSeek-V3 models.

DeepSeek distinguishes itself from AI companies that rely on reinforcement learning from human feedback (RLHF) by using reinforcement learning (RL) without human intervention.

Its R1-Zero model learns entirely through an automated reward system and is able to self-score in math, programming, and logic tasks. This process gives rise to spontaneous "chain of thought reasoning" capabilities, allowing the model to extend reasoning time, re-evaluate assumptions, and dynamically adjust strategies.
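An automated reward of this kind can be illustrated with a small rule-based scorer. The following is only a minimal sketch under stated assumptions, not DeepSeek's actual reward code: the `<think>`/`<answer>` tag layout, the function names, and the `format_weight` value are all hypothetical choices made for illustration.

```python
import re
from typing import Optional


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the text inside the last <answer>...</answer> pair, if any."""
    matches = re.findall(r"<answer>(.*?)</answer>", completion, flags=re.DOTALL)
    return matches[-1].strip() if matches else None


def format_reward(completion: str) -> float:
    """1.0 if the output is exactly a <think> block followed by an <answer> block."""
    pattern = r"\s*<think>.*?</think>\s*<answer>.*?</answer>\s*"
    return 1.0 if re.fullmatch(pattern, completion, flags=re.DOTALL) else 0.0


def accuracy_reward(completion: str, reference: str) -> float:
    """1.0 if the extracted answer matches the known reference exactly."""
    answer = extract_final_answer(completion)
    return 1.0 if answer is not None and answer == reference.strip() else 0.0


def total_reward(completion: str, reference: str, format_weight: float = 0.5) -> float:
    """Combine both signals; no human rater is involved at any point."""
    return accuracy_reward(completion, reference) + format_weight * format_reward(completion)


if __name__ == "__main__":
    sample = "<think>2 + 2 equals 4</think><answer>4</answer>"
    print(total_reward(sample, "4"))
```

Because the score depends only on a checkable reference answer and a fixed output format, it can be computed for millions of samples without annotators, which is what makes tasks such as math and programming a natural fit for this approach.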

Although R1-Zero's initial outputs mixed multiple languages, DeepSeek successfully developed the DeepSeek R1 model by introducing a small amount of high-quality human-annotated data into the RL process.

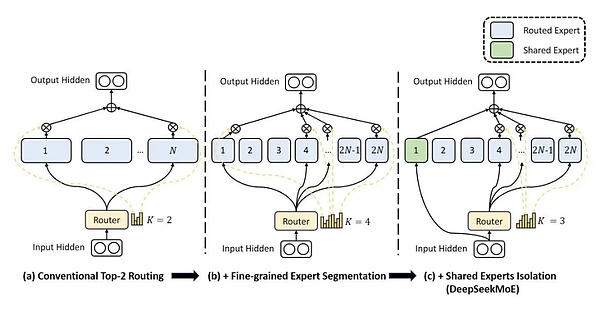

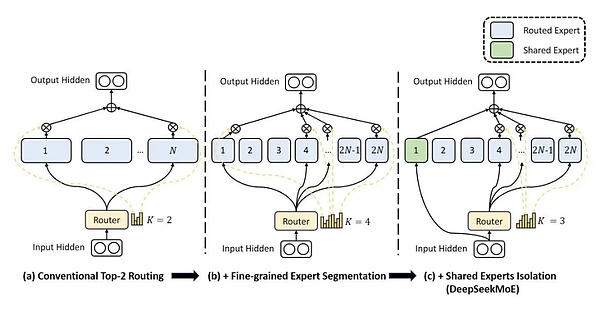

DeepSeek also uses a "mixture of experts" (MoE) design. MoE allows the model to dynamically select specialized sub-networks (the "experts") to process different parts of the input, which significantly improves efficiency.

Unlike traditional dense models, which run every parameter for every input, an MoE model activates only a portion of its expert networks, reducing computational cost while maintaining high performance. This approach enables DeepSeek to scale efficiently, delivering better accuracy with low power consumption and low latency.
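The routing idea can be sketched in a few lines of plain Python. This is a generic top-k gating example, not DeepSeek's architecture: the linear gate, the toy scaling experts, and names such as `moe_forward` and `make_scaling_expert` are assumptions introduced purely for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[Sequence[float]], List[float]]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_scaling_expert(factor: float) -> Expert:
    """Toy expert that multiplies its input by a constant (hypothetical)."""
    return lambda x: [factor * xi for xi in x]


def moe_forward(
    x: Sequence[float],
    gate_weights: Sequence[Sequence[float]],
    experts: Sequence[Expert],
    top_k: int = 2,
) -> Tuple[List[float], List[int]]:
    """Route x to the top_k highest-scoring experts and mix their outputs."""
    # One gate score per expert: dot product of that expert's gate row with x.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    # Keep only the top_k experts; the others are never evaluated.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalise the gate scores over the chosen experts only.
    weights = softmax([scores[i] for i in chosen])
    output = [0.0] * len(x)
    for weight, index in zip(weights, chosen):
        expert_out = experts[index](x)
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output, chosen


if __name__ == "__main__":
    experts = [make_scaling_expert(f) for f in (1.0, 2.0, 3.0, 4.0)]
    gate = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [-1.0, 0.0]]
    out, used = moe_forward([1.0, 0.0], gate, experts, top_k=2)
    print(used, out)
```

With four experts and `top_k=2`, only half of the expert computation runs for this input, which is the source of the cost saving described above; production systems add details such as load balancing that this sketch leaves out.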

DeepSeek's focus on RL, MoE, and post-training optimization points to the future of AI computing infrastructure: memory, networking, and compute that are optimized together to be leaner, faster, and smarter.

02. Challenging traditional proprietary models

Ashu Garg, general partner at Foundation Capital, argues that scale is no longer the only winning formula in AI. He points out that DeepSeek treats AI as a systems challenge and has optimized comprehensively, from model architecture to hardware utilization.

He also emphasizes that the next wave of AI innovation will be led by startups that use large models to design sophisticated "agent systems" that can handle complex tasks rather than just automate simple operations.

Lacking access to Nvidia's top-end H100 GPU, DeepSeek improved inter-chip communication by reprogramming 20 processing units on the H800 GPU and used FP8 quantization to reduce memory overhead. It also introduced multi-token prediction, which lets the model predict several tokens at a time instead of one token at a time.
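The effect of these two techniques can be approximated with back-of-the-envelope arithmetic. The sketch below is illustrative only: the parameter count is a made-up round number, the helper names are hypothetical, and the step count assumes every extra predicted token is kept, so it is an idealized upper bound rather than a measured speed-up.

```python
import math

# Storage size of one value in each numeric format, in bytes.
BYTES_PER_VALUE = {"fp32": 4, "bf16": 2, "fp8": 1}


def weight_memory_gib(num_params: int, fmt: str) -> float:
    """Memory needed to hold num_params values in the given format, in GiB."""
    return num_params * BYTES_PER_VALUE[fmt] / 2**30


def decoding_steps(num_tokens: int, tokens_per_step: int) -> int:
    """Forward passes needed to emit num_tokens when each pass yields tokens_per_step."""
    return math.ceil(num_tokens / tokens_per_step)


if __name__ == "__main__":
    params = 2**30  # hypothetical model size, chosen for round numbers
    for fmt in ("fp32", "bf16", "fp8"):
        print(fmt, weight_memory_gib(params, fmt), "GiB")
    print("one token per step:", decoding_steps(100, 1), "steps")
    print("two tokens per step:", decoding_steps(100, 2), "steps")
```

Halving the bytes per value halves the memory that must be stored and moved between chips, which is why low-precision formats matter most on hardware with constrained interconnect bandwidth.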

Beyond that, DeepSeek's success in open-source AI has challenged the traditional proprietary-model approach. The widespread adoption of its framework shows that AI development is shifting in a more community-driven direction.

DeepSeek also breaks the inherent idea that "large-scale AI breakthroughs require huge infrastructure investments." By proving that top models can be trained efficiently, it forces industry leaders to rethink whether multi-billion dollar GPU clusters are really needed.

As AI models become more efficient, overall usage is increasing.

DeepSeek’s cost-effectiveness has lowered the barrier to entry and spawned a new wave of startups with streamlined AI architectures. This trend points to a broader shift in the AI ecosystem, where efficiency is becoming a core differentiator, rather than just raw computing power.

In fact, DeepSeek did not create a completely new field, but rather optimized and improved existing AI technology, demonstrating the power of iteration.

This raises the question: Is the first-mover advantage in AI development really sustainable? Perhaps continuous improvement is where true leadership lies.

With advances in speed, reasoning capabilities, and cost-effectiveness, DeepSeek is paving the way for a new era of AI-driven applications.

The industry is on the verge of a wave of AI agents capable of handling complex workflows that will revolutionize industries by increasing efficiency, reducing costs, and enabling new use cases that were previously impossible.

Overall, the rise of DeepSeek signals a shift toward more accessible and cost-effective AI solutions.

As the industry adapts, companies must balance proprietary innovation with open collaboration to ensure the next wave of AI developments remains efficient, adaptable, and scalable. As AI technology continues to advance, the interaction between leading AI companies and emerging players will define the next phase of technological advancement.

Jasper
