Author: Alex Xu, Mint Ventures

Introduction

So far, this crypto bull market cycle has been the most boring one in terms of business innovation. It lacks phenomenal hot tracks like the DeFi, NFT, and GameFi booms of the previous bull market, so the overall market is short of industry hot spots, and growth in users, industry investment, and developers has been relatively weak.

This is also reflected in current asset prices. Throughout the cycle, most altcoins, including ETH, have kept losing ground against BTC. After all, the valuation of smart contract platforms is determined by the prosperity of their applications. When application development and innovation are lackluster, it is hard for public chain valuations to rise.

AI is a relatively new crypto business category in this cycle. Benefiting from the explosive pace of development and the continuous hot spots in the wider business world, it is still likely to draw a meaningful increase in attention to AI track projects in the crypto world.

In the IO.NET report the author released in April, the necessity of combining AI and crypto was laid out: the advantages of crypto-economic solutions in determinism, in mobilizing and allocating resources, and in trustlessness may be one answer to three challenges of AI, namely randomness, resource intensity, and the difficulty of telling humans from machines.

In this article, the author tries to discuss and deduce some important issues in the AI track of the crypto economy, including:

· What narratives are budding in the crypto AI track, or may explode in the future

· The catalytic path and logic of these narratives

· Project targets related to narratives

· Risks and uncertainties of narrative deduction

This article is the author's interim thinking as of the time of publication. It may change in the future, and the views are highly subjective. There may also be errors in facts, data, and reasoning logic. Please do not use it as an investment reference. Criticism and discussion from peers are welcome.

The following is the main text.

The next wave of narratives in the crypto AI track

Before formally laying out the next wave of narratives in the crypto AI track, let's take a look at the main narratives of crypto AI today. By market capitalization, the projects above 1 billion US dollars are:

Computing power: Render (RNDR, market capitalization of 3.85 billion), Akash (market capitalization of 1.2 billion), IO.NET (valued at 1 billion in its latest primary financing round)

Algorithm network: Bittensor (TAO, market capitalization of 2.97 billion)

AI agent: Fetch.ai (FET, market capitalization of 2.1 billion before the merger)

Data time: 2024.5.24, currency unit is US dollars.

Beyond the fields above, which AI track will produce the next single project with a market value above 1 billion?

I think it can be speculated from two perspectives: the narrative of the "industry supply side" and the narrative of the "GPT moment".

The first perspective of AI narrative: From the industry supply side, look at the energy and data track opportunities behind AI

From the industry supply side, the four driving forces for the development of AI are:

· Algorithms: High-quality algorithms can perform training and inference tasks more efficiently

· Computing power: Both model training and model inference require GPU hardware to provide computing power, which is also the main industry bottleneck at present. The chip shortage has led to high prices for mid-to-high-end chips

· Energy: The data centers required by AI consume a great deal of energy. In addition to the power the GPUs themselves need to perform computing tasks, a lot of energy is also needed to cool them. The cooling system of a large data center accounts for about 40% of its total energy consumption

· Data: Improving the performance of large models requires expanding training parameters, which means a huge demand for high-quality data

Of these four driving forces, the algorithm and computing power tracks already have crypto projects with a circulating market value above 1 billion US dollars, while the energy and data tracks have no project of that size.

In fact, supply shortages in energy and data may arrive soon and become a new wave of industry hot spots, thereby driving a wave of related projects in the crypto field.

Let's talk about energy first.

On February 29, 2024, Musk said at the Bosch Connected World 2024 Conference: "I predicted the chip shortage more than a year ago. The next shortage will be electricity. I think there will not be enough electricity to run all the chips next year."

In terms of specific data, the Stanford Institute for Human-Centered Artificial Intelligence (HAI), led by Fei-Fei Li, releases the "AI Index Report" every year. In the report the team released in 2022 on the AI industry in 2021, the researchers assessed that AI's energy consumption that year accounted for only 0.9% of global electricity demand, so the pressure on energy and the environment was limited. In 2023, the International Energy Agency (IEA) summarized that global data centers consumed about 460 terawatt hours (TWh) of electricity, accounting for 2% of global electricity demand, and predicted that by 2026 global data center energy consumption will be at least 620 TWh and at most 1,050 TWh.

In fact, the IEA's estimate is still conservative, because a large number of AI-related projects are about to be launched, and the scale of their energy demand is far beyond what the IEA imagined in 2023.

Take, for example, the Stargate project that Microsoft and OpenAI are planning. It is expected to start in 2028 and be completed around 2030. The project plans to build a supercomputer with millions of dedicated AI chips to provide OpenAI with unprecedented computing power to support its research and development in artificial intelligence, especially large language models. The plan is expected to cost more than $100 billion, 100 times the cost of today's large data centers.

The energy consumption of the Stargate project alone is as high as 50 terawatt hours.
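To put the figures quoted above side by side, here is a small back-of-the-envelope sketch. It only uses the numbers cited in the text; treating Stargate's 50 TWh as an annual figure comparable to the IEA total is an assumption made for illustration, since the text does not state the period.

```python
# Figures quoted in the text (IEA estimate and projection), in TWh.
DATA_CENTER_TWH = 460.0       # global data center consumption cited from the IEA
DATA_CENTER_SHARE = 0.02      # share of global electricity demand (2%)
PROJECTION_LOW_TWH = 620.0    # IEA low case for 2026
PROJECTION_HIGH_TWH = 1050.0  # IEA high case for 2026
STARGATE_TWH = 50.0           # assumption: treated as an annual figure

# Global electricity demand implied by "460 TWh is 2% of demand".
implied_global_demand = DATA_CENTER_TWH / DATA_CENTER_SHARE

# Growth implied by the low and high projections, relative to 460 TWh.
growth_low = PROJECTION_LOW_TWH / DATA_CENTER_TWH - 1
growth_high = PROJECTION_HIGH_TWH / DATA_CENTER_TWH - 1

# Stargate's quoted consumption relative to the 460 TWh baseline.
stargate_share = STARGATE_TWH / DATA_CENTER_TWH

print(f"Implied global demand: {implied_global_demand:,.0f} TWh")
print(f"Projected growth by 2026: {growth_low:.1%} to {growth_high:.1%}")
print(f"Stargate vs. data center baseline: {stargate_share:.1%}")
```

Under these assumptions, a single project would add roughly a tenth of the consumption the IEA attributed to all data centers worldwide.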

This is precisely why OpenAI CEO Sam Altman said at the Davos Forum in January this year: "Future artificial intelligence needs energy breakthroughs, because the electricity consumed by artificial intelligence will far exceed people's expectations."

After computing power and energy, the next area of shortage in the rapidly growing AI industry is likely to be data.

Or rather, the shortage of high-quality data required by AI has already become a reality.

At present, humans have basically worked out the law behind the growth of large language model capabilities from the evolution of GPT: by expanding model parameters and training data, the ability of the model can be improved exponentially, and there is no technical bottleneck in this process in the short term.

But the problem is that high-quality and public data may become increasingly scarce in the future, and AI products may face the same supply and demand contradictions in data as chips and energy.

First, disputes over data ownership have increased.

On December 27, 2023, The New York Times formally sued OpenAI and Microsoft in U.S. federal district court, accusing them of using millions of its articles without permission to train GPT models, demanding that they be held responsible for "billions of dollars in statutory and actual damages for illegal copying and use of works of unique value," and calling for the destruction of all models and training data containing The New York Times' copyrighted material.

At the end of March, The New York Times issued a new statement, targeting not only OpenAI but also Google and Meta. The statement said that OpenAI transcribed the speech from a large number of YouTube videos with a speech recognition tool called Whisper and used the resulting text to train GPT-4. The New York Times said that it is now very common for large companies to resort to this kind of petty theft when training AI models, and that Google is doing the same: it also converts YouTube video content into text to train its own large models, which essentially infringes on the rights of video content creators.

As the "first AI copyright case", the dispute between The New York Times and OpenAI may not reach a conclusion soon, given the complexity of the case and its far-reaching impact on the future of content and the AI industry. One possible outcome is that the two parties settle out of court, with the deep-pocketed Microsoft and OpenAI paying a large amount of compensation. But more data copyright friction in the future is bound to raise the overall cost of high-quality data.

In addition, as the world's largest search engine, Google has also revealed that it is considering charging for its search function, but the charging object is not the general public, but AI companies.

Source: Reuters

Google's search engine servers store a huge amount of content. It can even be said that Google stores all the content that has appeared on Internet pages since the start of the 21st century. Today's AI-driven search products, such as Perplexity overseas and Kimi and Mita in China, process this search data with AI and then output it to users. If search engines charge AI companies, the cost of obtaining data will inevitably rise.

In fact, in addition to public data, AI giants are also eyeing non-public internal data.

Photobucket is a long-established image and video hosting website that had 70 million users and nearly half of the U.S. online photo market in the early 2000s. With the rise of social media, the number of Photobucket users has dropped significantly, and only 2 million active users remain (they have to pay a high fee of US$399 per year). According to the agreement and privacy policy users accepted when registering, accounts that have not been used for more than one year will be reclaimed, and Photobucket reserves the right to use the pictures and videos uploaded by users. Photobucket CEO Ted Leonard revealed that its 1.3 billion photos and videos are extremely valuable for training generative AI models. He is negotiating with several technology companies to sell the data, with offers ranging from 5 cents to $1 per photo and more than $1 per video. He estimates that the data Photobucket can provide is worth more than $1 billion.

EPOCH, a research team focusing on development trends in artificial intelligence, has published a report on the data required for machine learning, "Will we run out of data? An analysis of the limits of scaling datasets in Machine Learning", based on the use of data and the generation of new data in machine learning in 2022, and taking the growth of computing resources into account. The report concludes that high-quality text data will be exhausted between February 2023 and 2026, and image data will be exhausted between 2030 and 2060. Unless the efficiency of data utilization improves significantly or new data sources emerge, the current trend of large-scale machine learning models that rely on massive data sets may slow down.

And judging from the fact that AI giants are buying data at high prices, free high-quality text data has basically been exhausted. EPOCH's prediction two years ago was relatively accurate.
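As a rough check on the Photobucket figures quoted earlier, the sketch below multiplies the quoted archive size by the quoted per-photo price range. It assumes, purely for illustration, that all 1.3 billion items are priced like photos; the text does not give the split between photos and videos.

```python
# Figures quoted in the text.
ITEMS = 1_300_000_000   # photos and videos in the Photobucket archive
PRICE_LOW_USD = 0.05    # lowest quoted offer per photo
PRICE_HIGH_USD = 1.00   # highest quoted offer per photo
CEO_ESTIMATE_USD = 1_000_000_000

# Assumption: every item is priced like a photo (videos were quoted higher).
low_value = ITEMS * PRICE_LOW_USD
high_value = ITEMS * PRICE_HIGH_USD

# Average price per item needed to reach the CEO's "more than $1 billion".
break_even_price = CEO_ESTIMATE_USD / ITEMS

print(f"Implied archive value: ${low_value:,.0f} to ${high_value:,.0f}")
print(f"Average price needed for $1 billion: ${break_even_price:.2f} per item")
```

Under this assumption, the $1 billion estimate is only reachable near the top of the quoted price range.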

At the same time, solutions that address the "AI data shortage" are also emerging, namely AI data provision services.

Defined.ai is a company that provides customized real high-quality data to AI companies.

Examples of the types of data that Defined.ai can provide: https://www.defined.ai/datasets

Its business model works as follows. AI companies give Defined.ai their data requirements. For pictures, for example, the quality requirements might be a minimum resolution, no blur or overexposure, and authentic content. In terms of content, AI companies can specify themes that match their training tasks, such as night photos of traffic cones, parking lots, and signboards, to improve AI's recognition rate in night scenes. Members of the public can take on tasks and upload their material after shooting, and the company reviews it. The material that meets the requirements is then paid for by the piece. The price is about 1-2 US dollars for a high-quality picture, 5-7 US dollars for a short clip of more than 10 seconds, 100-300 US dollars for a high-quality video of more than 10 minutes, and 1 US dollar per thousand words of text. The person who takes on the subcontracted task receives about 20% of the fee. Data provision may become another crowdsourcing business after "data labeling".

Global crowdsourced task allocation, economic incentives, the pricing, circulation and privacy protection of data assets, and participation open to everyone: it sounds like a business category that is particularly well suited to the Web3 paradigm.
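The price list above can be turned into a simple estimator. This is a minimal sketch, not Defined.ai's actual pricing logic: the category names and the `estimate_batch` helper are hypothetical, and it assumes the roughly 20% contributor share applies directly to the quoted price.

```python
# Price ranges quoted in the text, in USD per item: (low, high).
PRICE_RANGES_USD = {
    "picture": (1.0, 2.0),              # high-quality picture
    "short_clip": (5.0, 7.0),           # clip of more than 10 seconds
    "long_video": (100.0, 300.0),       # high-quality video over 10 minutes
    "text_per_1000_words": (1.0, 1.0),  # per thousand words of text
}
CONTRIBUTOR_SHARE = 0.20  # assumption: ~20% of the quoted price goes to the contributor


def estimate_batch(quantities):
    """Return (low, high) fee and contributor payout for a batch of items."""
    low = sum(PRICE_RANGES_USD[kind][0] * n for kind, n in quantities.items())
    high = sum(PRICE_RANGES_USD[kind][1] * n for kind, n in quantities.items())
    return {
        "fee": (low, high),
        "contributor_payout": (low * CONTRIBUTOR_SHARE, high * CONTRIBUTOR_SHARE),
    }


# Hypothetical order: 1,000 night-scene pictures and 100 short clips.
result = estimate_batch({"picture": 1000, "short_clip": 100})
print(result)
```

For this hypothetical order the fee falls between $1,500 and $2,700, of which contributors would collect roughly $300 to $540.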

AI narrative targets from the perspective of the industry supply side

The attention caused by the chip shortage has spread into the crypto industry, making distributed computing power the hottest and most highly valued AI track category so far.

If the supply and demand contradiction of the AI industry in energy and data breaks out in the next 1-2 years, which related projects does the crypto industry currently have?

Let's look at energy targets first.

Very few energy projects have been listed on top centralized exchanges (CEXs). In fact there is only one, Power Ledger (token POWR).

Power Ledger was established in 2017. It is an integrated energy platform based on blockchain technology. It aims to decentralize energy trading, promote direct trading of electricity between individuals and communities, support the widespread adoption of renewable energy, and ensure the transparency and efficiency of transactions through smart contracts. Initially, Power Ledger ran on a consortium chain adapted from Ethereum. In the second half of 2023, Power Ledger updated its white paper and launched its own public chain, adapted from Solana's technical framework to handle the high-frequency micro-transactions of the distributed energy market. At present, Power Ledger's main businesses include:

· Energy trading: allowing users to directly buy and sell electricity, especially electricity from renewable energy, on a peer-to-peer basis.

· Environmental product trading: such as the trading of carbon credits and renewable energy certificates, as well as financing based on environmental products.

· Public chain operation: attracting application developers to build applications on the Powerledger blockchain, with the transaction fees of the public chain paid in POWR tokens.

Currently, the circulating market value of the Power Ledger project is $170 million, and the fully diluted valuation (FDV) is $320 million.

Compared with energy, the data track has a richer set of crypto targets.

The author lists only the data track projects that he is currently following and that have been listed on at least one of Binance, OKX and Coinbase, arranged from low to high by FDV:

1. Streamr – DATA

Streamr’s value proposition is to build a decentralized real-time data network that allows users to trade and share data freely while maintaining full control of their own data. Through its data market, Streamr hopes to enable data producers to sell data streams directly to interested consumers without the need for intermediaries, thereby reducing costs and improving efficiency.

Source: https://streamr.network/hub/projects

In an actual cooperation case, Streamr cooperated with another Web3 vehicle-mounted hardware project DIMO to collect temperature, air pressure and other data through the DIMO hardware sensors mounted on the vehicle to form a weather data stream for transmission to the required agencies.

Compared with other data projects, Streamr focuses more on data from the Internet of Things and hardware sensors. In addition to the DIMO vehicle data mentioned above, other projects include real-time traffic data streams in Helsinki. As a result, Streamr's project token DATA once doubled in a single day last December, when the DePIN concept was at its hottest.

Currently, the circulating market value of the Streamr project is $44 million, and the fully diluted valuation (FDV) is $58 million.

2. Covalent – CQT

Unlike other data projects, Covalent provides blockchain data. The Covalent network reads data from blockchain nodes through RPC, and then processes and organizes the data to create an efficient query database. In this way, Covalent users can quickly retrieve the information they need without having to perform complex queries directly from blockchain nodes. This type of service is also called "blockchain data indexing."

Covalent's clients are mainly businesses, including Dapp projects such as various DeFi protocols, and many centralized crypto companies, such as Consensys (parent company of MetaMask), CoinGecko (a well-known crypto asset market data site), Rotki (tax tool), Rainbow (crypto wallet), etc. In addition, Fidelity, a giant in the traditional financial industry, and Ernst & Young, one of the Big Four accounting firms, are also Covalent's clients. According to data officially disclosed by Covalent, the project's revenue from data services has exceeded that of The Graph, a leading project in the same field.

Due to the integrity, openness, authenticity and real-time nature of on-chain data, the Web3 industry is expected to become a high-quality data source for segmented AI scenarios and specific "AI small models". As a data provider, Covalent has begun to provide data for various AI scenarios and has launched verifiable structured data specifically for AI.

Source: https://www.covalenthq.com/solutions/decentralized-ai/

For example, it provides data for the on-chain smart trading platform SmartWhales, using AI to identify profitable trading patterns and addresses; Entendre Finance uses Covalent's structured data and AI processing for real-time insights, anomaly detection and predictive analysis.

At present, the main scenarios of the on-chain data services provided by Covalent are still dominated by finance, but with the generalization of Web3 products and data types, the use scenarios of on-chain data will be further expanded.

Currently, the circulating market value of the Covalent project is $150 million, and the fully diluted valuation (FDV) is $235 million. Compared with The Graph, the blockchain data indexing project in the same track, it has a relatively obvious valuation advantage.

3. Hivemapper – HONEY

Among all data materials, the unit price of video data is often the highest. Hivemapper can provide AI companies with data including video and map information. Hivemapper itself is a decentralized global map project that aims to create a detailed, dynamic and accessible map system through blockchain technology and community contributions. Participants can capture map data through dashcams and add it to the open source Hivemapper data network, and receive rewards based on contributions in the project token HONEY. In order to improve the network effect and reduce interaction costs, Hivemapper is built on Solana.

Hivemapper was founded in 2015. Its original vision was to use drones to create maps, but the team later found that this model was difficult to scale, so it turned to using dashcams and smartphones to capture geographic data, reducing the cost of global map production.

Compared with street view and map software such as Google Maps, Hivemapper can use its incentive network and crowdsourcing model to expand map coverage more efficiently, keep real-world map imagery fresh, and improve video quality.

Before the outbreak of AI's demand for data, Hivemapper's main customers included the automotive industry's autonomous driving department, navigation service companies, governments, insurance and real estate companies. Today, Hivemapper can provide AI and large models with a wide range of road and environmental data through APIs. Through the input of continuously updated image and road feature data streams, AI and ML models will be able to better convert data into improved capabilities and perform tasks related to geographic location and visual judgment.

Data source: https://hivemapper.com/blog/diversify-ai-computer-vision-models-with-global-road-imagery-map-data/

Currently, the circulating market value of the Hivemapper (HONEY) project is $120 million, and the fully diluted valuation (FDV) is $496 million.

In addition to the above three projects, other projects in the data track include The Graph – GRT (market value of $3.2 billion, FDV of $3.7 billion), whose business is similar to Covalent and also provides blockchain data indexing services; and Ocean Protocol – OCEAN (market value of $670 million, FDV of $1.45 billion, this project is about to merge with Fetch.ai and SingularityNET, and the token will be converted to ASI), an open source protocol that aims to promote the exchange and monetization of data and data-related services, connecting data consumers with data providers, so as to share data while ensuring trust, transparency and traceability.
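The gap between circulating market value and FDV differs a lot across the data track projects listed above. The sketch below computes the ratio of the two for each project, using only the figures quoted in the text. Reading that ratio as the share of total token supply already in circulation is an assumption (it holds when both figures use the same token price).

```python
# (circulating market value, FDV) in millions of USD, as quoted in the text.
PROJECTS = {
    "Streamr (DATA)": (44, 58),
    "Covalent (CQT)": (150, 235),
    "Hivemapper (HONEY)": (120, 496),
    "The Graph (GRT)": (3200, 3700),
    "Ocean Protocol (OCEAN)": (670, 1450),
}


def circulating_ratio(market_value, fdv):
    """Share of FDV that is already reflected in circulating market value."""
    return market_value / fdv


ratios = {name: circulating_ratio(mv, fdv) for name, (mv, fdv) in PROJECTS.items()}

# Print from the lowest ratio (most supply still locked) to the highest.
for name, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{name:<24} {ratio:.0%}")
```

A low ratio suggests that a large part of the supply has yet to enter circulation, which is one reason FDV is worth reading alongside the circulating figure.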

The second perspective of AI narrative: GPT moment reappears, general artificial intelligence comes

In my opinion, the first year of the "AI track" in the crypto industry was 2023, when GPT shocked the world. The surge in crypto AI projects is more of an "afterglow" of the explosive development of the AI industry.

Although capabilities have kept improving after GPT-3.5 with GPT-4, GPT-4 Turbo and others, Sora has demonstrated amazing video creation capabilities, and large language models outside of OpenAI have also developed rapidly, it is undeniable that the cognitive impact of AI's technological progress on the public is weakening. People are gradually starting to use AI tools, and large-scale job replacement does not seem to have occurred yet.

So, will there be a "GPT moment" in the AI field in the future, and will there be a leapfrog development of AI that shocks the public, making people realize that their lives and work will be changed as a result?

This moment may be the advent of general artificial intelligence (AGI).

AGI refers to machines that have comprehensive cognitive abilities similar to humans and can solve various complex problems, not just specific tasks. AGI systems have highly abstract thinking, extensive background knowledge, common sense reasoning and causal understanding in all fields, and cross-disciplinary transfer learning. AGI's performance is no different from the best humans in each field, and in terms of comprehensive ability, it completely surpasses the best human group.

In fact, whether it is presented in science fiction novels, games, film and television works, or the public's expectations after the rapid popularization of GPT, the public has long anticipated the emergence of AGI that exceeds the level of human cognition. In other words, GPT itself is the pioneer product of AGI and the prophecy of general artificial intelligence.

The reason GPT has had such great industrial energy and psychological impact is that the speed of its arrival and its performance exceeded the public's expectations: people did not expect that an artificial intelligence system able to pass the Turing test would really arrive, and so quickly.

In fact, general artificial intelligence (AGI) may reproduce the suddenness of the "GPT moment" within 1-2 years: people have just adapted to the assistance of GPT when they find that AI is no longer just an assistant. It can even independently complete extremely creative and challenging work, including problems that have stumped top human scientists for decades.

On April 8 this year, Musk was interviewed by Nicolai Tangen, chief executive of Norway's sovereign wealth fund, and talked about when AGI will appear.

He said: "If AGI is defined as being smarter than the smartest part of humanity, I think it will most likely appear in 2025."

That is, according to his inference, it will take at most one and a half years for AGI to arrive. Of course, he added a prerequisite, which is "if both electricity and hardware can keep up."

The benefits of the arrival of AGI are obvious.

It means that the level of human productivity will take a big step up, and a large number of scientific research problems that have trapped us for decades will be solved. If we define "the smartest part of humanity" as the level of Nobel Prize winners, it means that as long as there is enough energy, computing power, and data, we can have countless tireless "Nobel Prize winners" to tackle the most difficult scientific problems around the clock.

In fact, Nobel Prize winners are not as rare as one in a hundred million. Most of them are at the level of top university professors in terms of ability and intelligence, but through probability and luck they chose the right direction, kept working on it and got results. Their equally capable peers and colleagues might well have won the Nobel Prize in a parallel universe of scientific research. Unfortunately, there are still not enough people at the level of top university professors taking part in scientific breakthroughs, so the speed of "traversing all the correct directions of scientific research" is still very slow.

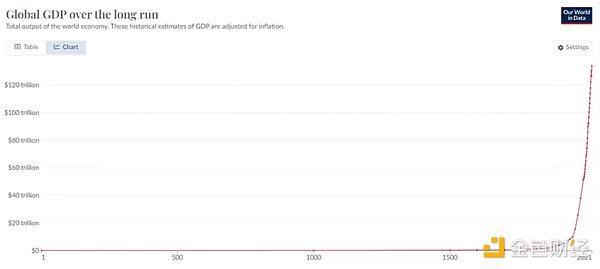

With AGI, with sufficient energy and computing power, we can have unlimited "Nobel Prize winner" level AGI to conduct in-depth exploration in any possible scientific research breakthrough direction, and the speed of technological improvement will be dozens of times faster. The improvement of technology will cause the resources that we now think are quite expensive and scarce to increase hundreds of times in 10 to 20 years, such as food production, new materials, new drugs, high-level education, etc. The cost of obtaining these will also decrease exponentially, allowing us to feed more people with fewer resources, and the per capita wealth will increase rapidly.

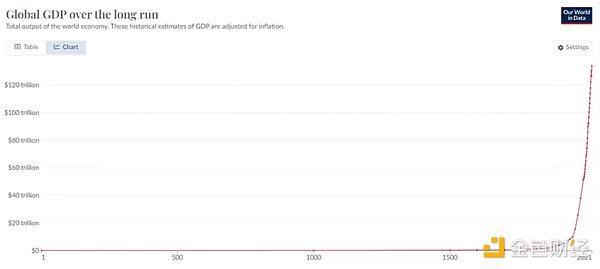

Global GDP total trend chart, data source: World Bank

This may sound a bit sensational, so let's look at two examples, which I have used in my previous research report on IO.NET:

· In 2018, Frances Arnold, the Nobel Prize winner in Chemistry, said at the award ceremony: "Today we can read, write and edit any DNA sequence in practical applications, but we can't compose it yet." Just five years after her speech, in 2023, researchers from Stanford University and Salesforce Research in Silicon Valley published a paper in Nature Biotechnology. They created 1 million new proteins from scratch through a large language model fine-tuned based on GPT3, and found 2 proteins with completely different structures that both have bactericidal ability, which are expected to become a way of fighting bacteria other than antibiotics. In other words: with the help of AI, the bottleneck of protein "creation" has been broken.

· Prior to this, the artificial intelligence AlphaFold algorithm predicted almost all 214 million protein structures on the earth within 18 months. This achievement is hundreds of times the work of all human structural biologists in the past.

The change has already happened, and the advent of AGI will further accelerate this process.

On the other hand, the challenges brought by the advent of AGI are also huge.

AGI will not only replace a large number of knowledge workers. Physical service workers, who are now considered "less affected by AI", will also be hit as robot technology matures and as new materials research lowers production costs. The proportion of jobs replaced by machines and software will increase rapidly.

By then, two problems that once seemed very far away will soon surface:

1. Employment and income issues for a large number of unemployed people

2. In a world where AI is everywhere, how to distinguish AI from humans

Worldcoin/Worldchain is trying to provide a solution: giving the public a basic income through a UBI (universal basic income) system, and distinguishing humans from AI using iris-based biometrics.

In fact, UBI, which gives money to all people, is not a castle in the air without real practice. Countries such as Finland and England have tried out universal basic income, and political parties in Canada, Spain, India and other countries are actively proposing related experiments.

The advantage of distributing UBI through a model of biometric recognition plus blockchain is that the system is global and covers a wider population. In addition, other business models can be built on the user network expanded through income distribution, such as financial services (DeFi), social networking, task crowdsourcing, etc., to form business synergy within the network, which is exactly

One of the corresponding targets of the impact effect brought by the advent of AGI is Worldcoin-WLD, with a circulation market value of $1.03 billion and a full circulation market value of $47.2 billion.

Risks and uncertainties of narrative deduction

This article is different from many project and track research reports previously released by Mint Ventures. The deduction and prediction of narratives are highly subjective. Readers are advised to regard the content of this article only as a divergent discussion rather than a prediction of the future. The author's narrative deduction above faces many uncertainties, any of which may make these conjectures wrong. These risks or influencing factors include but are not limited to:

Energy: Rapid decline in energy consumption caused by GPU replacement

Although the energy demand around AI has soared, chip manufacturers represented by NVIDIA are delivering higher computing power at lower power consumption through continuous hardware upgrades. For example, in March this year, NVIDIA released the GB200, a new generation AI computing card that integrates two B200 GPUs and one Grace CPU. Its training performance is 4 times that of the H100, the previous generation's mainstream AI GPU, and its inference performance is 7 times that of the H100, while the energy it requires is only 1/4 of the H100's. Even so, AI's appetite for electricity is far from satisfied. As unit energy consumption falls and AI application scenarios and demand expand further, total energy consumption may actually increase.
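The point that total consumption can rise even as unit consumption falls can be sketched with simple arithmetic. The snippet below assumes the quoted "1/4 of H100" figure means energy per unit of AI workload, which is one possible reading of the text. The `total_energy_ratio` helper and the 10x demand scenario are hypothetical illustrations.

```python
# Assumption: new hardware needs 1/4 of the energy per unit of workload.
ENERGY_PER_UNIT_RATIO = 0.25


def total_energy_ratio(workload_growth, energy_per_unit_ratio=ENERGY_PER_UNIT_RATIO):
    """Total energy after the upgrade, relative to before (1.0 = unchanged)."""
    return workload_growth * energy_per_unit_ratio


# Workload growth at which the efficiency gain is exactly cancelled out.
break_even_growth = 1 / ENERGY_PER_UNIT_RATIO

print(f"Break-even workload growth: {break_even_growth:.0f}x")
print(f"Total energy if workload grows 2x:  {total_energy_ratio(2):.2f}x")
print(f"Total energy if workload grows 10x: {total_energy_ratio(10):.2f}x")
```

Under this reading, total energy use only falls if AI workloads grow less than 4x. Any faster growth in demand more than offsets the efficiency gain.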

Data: Q* plans to achieve "self-generated data"

There has long been a rumored project called "Q*" within OpenAI, which was mentioned in an internal message OpenAI sent to employees. According to Reuters, citing OpenAI insiders, this may be a breakthrough in OpenAI's pursuit of super intelligence/general artificial intelligence (AGI). Q* can reportedly not only use its capacity for abstraction to solve mathematical problems it has never seen before, but also create the data for training large models by itself, without being fed real-world data. If the rumor is true, the bottleneck that insufficient high-quality data places on large model training will be broken.

AGI Coming: OpenAI's Concerns

Whether AGI will really arrive in 2025, as Musk said, is still unknown, but its arrival is only a matter of time. However, as a direct beneficiary of the AGI narrative, Worldcoin's biggest concern may come from OpenAI. After all, it is widely regarded as the "OpenAI shadow token".

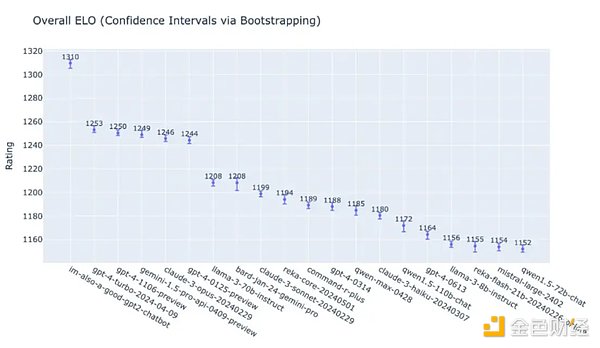

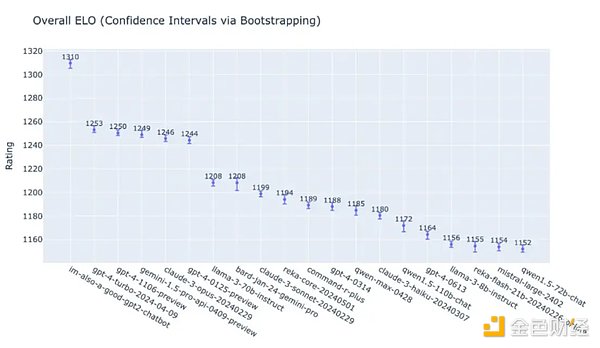

In the early morning of May 14, at its spring new product launch event, OpenAI showed the comprehensive task scores of the latest GPT-4o and 19 other versions of large language models. Judging from the table alone, GPT-4o's score of 1310 looks visually much higher than those ranked below it, but in terms of total score it is only 4.5% higher than second-place GPT-4 Turbo, 4.9% higher than fourth-place Google Gemini 1.5 Pro, and 5.1% higher than fifth-place Anthropic Claude 3 Opus.

It has been just over a year since GPT-3.5 shocked the world at its debut, and OpenAI's competitors have already come very close (although GPT-5 has not yet been released and is expected this year). Whether OpenAI can maintain its leading position in the industry is becoming less clear. If OpenAI's lead and dominance are diluted, or if it is even overtaken, then the narrative value of Worldcoin as OpenAI's shadow token will also decline.

In addition, beyond Worldcoin's iris authentication solution, more and more competitors are entering this market. For example, the palm scanning ID project Humanity Protocol has just announced a new round of financing of $30 million at a valuation of $1 billion. LayerZero Labs has also announced that it will run on Humanity and join its validator node network, using ZK proofs to authenticate credentials.
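The percentage leads above can be turned back into approximate scores for the competing models. This is a rough sketch that assumes each percentage is computed as GPT-4o's score divided by the competitor's score, minus one. The implied scores are derived estimates, not figures from the table shown at the event.

```python
GPT_4O_SCORE = 1310

# GPT-4o's lead over each model, as quoted in the text.
LEADS = {
    "GPT-4 Turbo": 0.045,
    "Gemini 1.5 Pro": 0.049,
    "Claude 3 Opus": 0.051,
}

# Assumption: lead = GPT_4O_SCORE / competitor_score - 1.
implied_scores = {
    model: round(GPT_4O_SCORE / (1 + lead)) for model, lead in LEADS.items()
}

for model, score in implied_scores.items():
    gap = GPT_4O_SCORE - score
    print(f"{model:<16} implied score ~{score} (gap of ~{gap} points)")
```

On this reading, the three competitors sit within a few points of each other and roughly 55 to 65 points behind GPT-4o.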

Conclusion

Finally, although the author has deduced the subsequent narratives of the AI track, the AI track is different from crypto-native tracks such as DeFi. It is more a product of the AI craze spilling over into the crypto world. At present, the business models of many projects have not yet been proven, and many projects are more like AI-themed memes (for example, RNDR resembles a meme of Nvidia, and Worldcoin resembles a meme of OpenAI). Readers should be cautious.

JinseFinance
