Editor's Note: Tokens are reshaping the value coordinates of the AI era. Are they the engine of an efficiency revolution, or a hidden reef of runaway costs? This issue examines the economic logic of the "new oil" of the AI era from the perspective of token cost reduction.

"Iron Shrimp" Fed by Tokens.

Author: Su Yang, Tencent Technology

Recent discussions about tokens have been quite surreal.

Debates over how to translate "Token" into Chinese are everywhere on social media, from "Ceographies" and "Intelligence Elements" to joke versions like "Wisdom Roots." Tokens are not a new concept; they have existed alongside neural networks since the first day large models were deployed at scale. But it was only after OpenClaw (commonly known as "Lobster") achieved widespread adoption that agent applications began to bring tokens into the public eye.

I believe there are two key issues: consumption is too high, and the price is too high. I remember that when OpenAI released GPT-5.4, some users reported burning $80 worth of tokens just by saying "Hello." At the time many people called this outrageous, but with the spread of OpenClaw, burning through tens of millions of tokens on a single task has become commonplace.

By contrast, Nvidia CEO Jensen Huang, at GTC 2026 and in many forums since, has stressed that engineers should use tokens heavily, even tying token usage to compensation incentives. In one interview, Huang stated, "If an engineer earning $500,000 a year hasn't even used $250,000 worth of tokens, I would be extremely alarmed."

The question is: will frantically burning tokens necessarily solve the problem? How many of those tokens are effective, and what is a reasonable return on investment? According to recent foreign media reports, one OpenAI programmer burned through 210 billion tokens in a week, equivalent to the text of 33 Wikipedias, but what did that level of consumption ultimately deliver?

I posted on WeChat Moments, asking whether heavy usage like this could get me to P10. A friend immediately commented, "It can help those selling tokens get to P10."

Clearly, the effectiveness of this frenzied token burning is questionable, but who benefits is certain. Jensen Huang describes Nvidia as the "King of Tokens," possessing the world's most advanced "Token Manufacturing Machine." But when he promotes token usage this relentlessly, even implying that not using tokens means falling behind, we can say two things: on one hand, Huang wants to fundamentally change how companies assess efficiency in the AI era; on the other, he has indirectly created token anxiety.

I. Tokens Are Too Expensive

Not long ago, I asked Zhou Hongyi about the issue of "tokens being too expensive." He said, "There may be a misunderstanding behind the idea that tokens are expensive, because the backend of large models can be flexibly configured."

In his understanding, users can choose models to control costs. "The cost of everyday chat conversations is actually very low. What really consumes Tokens are complex tasks, such as generating videos, creating short dramas, or writing novels."

I remember Cheetah Mobile CEO Fu Sheng saying in a video that, through some usage techniques, he cut his daily token cost from several hundred dollars at the start to just over ten dollars now. At roughly 7 yuan to the dollar, that is about 2,100 yuan for 30 days, or 25,200 yuan a year.
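
The conversion above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic: the exchange rate of 7 yuan per dollar is an assumption inferred from the article's own figures, `annualize` is a hypothetical helper name, and the 600 yuan benchmark is the high-end consumer membership price cited in the article.

```python
# Assumption: 7 yuan per US dollar, the rate implied by the article's figures.
CNY_PER_USD = 7.0


def annualize(daily_usd: float, cny_per_usd: float = CNY_PER_USD) -> dict:
    """Convert a daily token bill in dollars into 30-day and 12-month yuan totals."""
    monthly_cny = daily_usd * 30 * cny_per_usd
    yearly_cny = monthly_cny * 12
    return {"monthly_cny": monthly_cny, "yearly_cny": yearly_cny}


agent_bill = annualize(10.0)
print(agent_bill)  # {'monthly_cny': 2100.0, 'yearly_cny': 25200.0}

# Compare with a high-end consumer membership of about 600 yuan per year.
ratio = agent_bill["yearly_cny"] / 600
print(ratio)  # 42.0
```

On these assumptions, a ten-dollar-a-day agent habit costs about 42 times as much per year as a high-end consumer software membership.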

The question is: how many users can afford ten dollars a day? Among consumer-facing (to C) software on the Chinese internet today, a high-end membership for a tool such as CapCut costs only around 600 yuan per year, while entertainment memberships run roughly 300 yuan. It is practically impossible to find consumer-grade software with an annual fee above 25,000 yuan.

"Most people still wouldn't accept $10 a day; this will filter out a large number of non-paying users," I told Fu Sheng, who did not dispute my assessment.

Lately I have also been trying various "Lobster"-type products, and the costs involved go far beyond tokens. For example, if users need image generation, a dedicated image-generation model API is required; if they want to monitor news and updates, a paid search API is needed. These hidden costs will gradually deter most users. There may be open-source workarounds that cut costs, but open-source projects carry their own security risks.

On March 13th, during the first episode of Tencent Technology's "Shrimp Talk" livestream series, Lambda, a guest from Xuanwu Lab, shared data showing that his average monthly cost for "raising shrimp" (slang for running OpenClaw agents) exceeded 1,000 yuan.

Whether measured against the annual fees of consumer-grade tools or against feedback from the industry's "shrimp farmers," the claim that "tokens are too expensive" holds for agent-driven token consumption.

II. Storage Bottlenecks and Efficiency Black Holes

Tokens, simply put, are the basic units in which large language models process information. Users input prompts and models output answers; every word and every punctuation mark consumes tokens, which ultimately represents a computational cost.

In the past, many metrics were used to calculate the total cost of ownership (TCO) of computing power, including FLOPS/W for energy efficiency and cost per FLOP for average compute cost.
In this year's "Token Economics," Token/W has gradually become the consensus metric. "The cost of each of our tokens is the lowest in the world," said Jensen Huang at GTC.

However, no matter how cheap it is, and whatever the unit of measurement, a token is still a quantifiable input cost made up of R&D, hardware, deployment, energy, and operating costs. In other words, cost reduction has to come from these areas.

One piece of bad news for token cost reduction is the skyrocketing price of memory. HBM, for example, is a key component for large model training and inference. At the same time, the surge in inference data has driven up storage demand. In the first quarter of 2026, DRAM prices rose by more than 50% quarter-on-quarter, while NAND prices rose by as much as 150%.

Jensen Huang and Lisa Su have both declared they will take as much HBM as they can get, and memory manufacturers such as Samsung and Micron have disclosed that they have signed strategic long-term contracts of up to five years with their top clients.

The article "100 Days of Memory Price Surge Forces the Death of Budget Phones" noted that in the consumer market, budget phone models may have to be discontinued. Cloud providers, too, are now suffering from the same price increases.

The most optimistic industry forecast is that memory prices will fall by 2028; a more pessimistic one says 2030. As long as memory prices don't fall, token price reductions lack a crucial external lever.

Improved model capabilities are another lever for price reductions. "Some small 8B models are now approaching the capabilities of full-scale large models," said an academic researcher.
In this regard, Mianbi AI, in collaboration with a Tsinghua University team, proposed the Densing Law in *Nature*. It holds that the capability density of large models increases exponentially over time, roughly doubling every 3.5 months; equivalently, the number of parameters required for the same performance halves every 3.5 months.

A domestic AI chip industry practitioner also stressed that strong capability at a small scale drives costs down. "Look at the token prices of domestic open-source large models; they are basically positively correlated with model size."

Several domestic computing power practitioners stated that, beyond inference optimizations in architecture, memory, and other areas, **improving MFU (Model FLOPs Utilization) also leaves room for cost reduction**.

"MFU isn't so much related to the model itself, but mainly to the operators and scheduling strategies," said another practitioner, from the domestic in-memory computing chip industry. "Currently, the average inference MFU of mainstream large models is around 30%, and after optimization, it can exceed 50%, which is estimated to save 50% of the cost."

In other words, the industry is not yet making full use of GPU performance: it pays 100% of the GPU cost but uses less than a third of the computing power.

However, while higher MFU can lower the cost per token, whether the savings are passed on to end users depends on the commercial considerations of large model providers. As a weapon in a price war, it would undoubtedly be an effective lever.

III. Another Price War

Price wars among China's large models are not without precedent. In 2024, a fierce price war broke out among domestic vendors. It coincided with the launch of DeepSeek-V2, priced at 1 yuan per million input tokens and 2 yuan per million output tokens, about one percent of the price of GPT-4-Turbo.
The key to DeepSeek's price reduction at that time lay in inference optimization: the sparse MoE architecture significantly reduced the computational load, and MLA (Multi-head Latent Attention) compressed the KV cache by over 90%. After DeepSeek's price cuts, Alibaba, ByteDance, and others entered the fray, leading to a period when "tokens were free."

At a conference years ago, Wang Xiaochuan discussed price wars, arguing that this one was fundamentally different from the earlier group-buying and ride-hailing wars. He stated, "This price war is a direct supply of productivity; it's a price war in the B2B market." Wang Xiaochuan also stressed at the time that even if large companies incurred losses in the short term, they could potentially reach profitability within a year.

"With improved inference efficiency, subsidies led to a very significant increase in users," said an insider from a large model company that took part in the previous price war. "It probably cost several hundred million yuan."

In this round of token consumption, however, demand is surging from both B2B and B2C at once. The conditions for changing production relations, as in the group-buying and ride-hailing wars, are actually in place, yet the market has been surprisingly quiet.

The same insider believes that once a model's capabilities mature and a stable user base is established, companies may have little incentive to start another price war.

"Token consumption isn't on the same scale as in 2024. In this situation, waging a price war would force the ARR from existing users to bleed," said the aforementioned domestic AI chip industry practitioner. "It's unnecessary. The incremental growth brought by a price war is uncertain. Cutting into the existing user base first is a bad business strategy."
According to Artificial Analysis's tracking data, the API unit prices of domestic models are already low, but still nowhere near low enough for the massive consumption of agents. As mentioned earlier, hit by the hardware costs of memory and storage, domestic cloud providers are themselves facing price increases, with little chance of price cuts in the short term.

"This is a continuation of the price wars of the past two years, with domestic manufacturers holding a clear price advantage over North American companies. However, everyone understands that acquiring users is a protracted battle, not something that can be settled in one or two price wars," added the aforementioned domestic in-memory computing chip industry professional.

IV. "Welding" the Model to the Chip

To address the cost of runaway token consumption, some users have begun deploying models locally. Many have already configured local models for their "Lobster" on a Mac Mini. However, this approach drives up the cost of use in the short term, local deployment itself has a high barrier to entry, and the capabilities of open-source models may not meet user needs.

For entry-level users, some manufacturers are attempting to launch EdgeClaw hardware, adding a security narrative to the hardware business. This is a direction worth exploring, but the timing is poor given rising memory prices.

A Mini PC entrepreneur said earlier that the price increases are hitting the entire industry. "Before, users thought 'it's too expensive'; now they don't even look. They don't care how much memory or hard drive space you have," the entrepreneur said.

Meanwhile, some brands are launching barebone systems (without memory or storage) on e-commerce platforms, with prices starting below 2,000 yuan.
While these lack a "security narrative," they are the first hurdle that startups like EdgeClaw must clear.

For edge AI hardware built for the "Lobster," the biggest challenge is still the Mac Mini. Apple's supply chain power and profit margins can sustain the Mac Mini's extremely high cost-performance ratio, making it difficult for startups to tell a compelling story. Remember the DeepSeek "all-in-one" machines that were all the rage in early 2025? Do you still hear their stories in the industry today?

Besides integrated hardware such as all-in-one machines, **some startups are also trying to innovate at the underlying chip level.**

In February, the Taalas team launched a brand-new chip, the HC1. Built on TSMC's N6 process, the chip has a die size of 815mm² and a transistor count of only 53B, and it can run the Llama 3.1 8B model on a single chip. Most importantly, it delivers a single-user output of 16,960 TPS (tokens per second), an exceptionally high figure made possible by the HC1's design.

The Taalas team used Mask ROM to hard-code the Llama 3.1 8B model weights into the silicon die. The interconnects in the chip's metal layers act like neural network connections, essentially "soldering" the model onto the chip. This physically integrates computation and storage, eliminating HBM/DRAM data transfer entirely and breaking through the memory wall.

While its TPS is outstanding, its weakness stems from the same fact: the model is "soldered" onto the chip. It can only run a fixed version of a fixed model; the weights cannot be changed, the structure cannot be altered, and switching models requires a complete re-fabrication. You can think of it as a dedicated chip for a single purpose.

V. In Conclusion

All of this discussion comes back to the cost of token usage: what makes it expensive is not the unit price, but the exponential growth in token usage driven by heavy tasks.
I once tried using the "Lobster" to generate GIFs with a specific timestamp. When I mentioned it to a colleague, he said, "My colleague makes these GIFs; they take half a minute each, by hand." The example may not be typical, but spending several yuan to make just a few GIFs is clearly not economical.

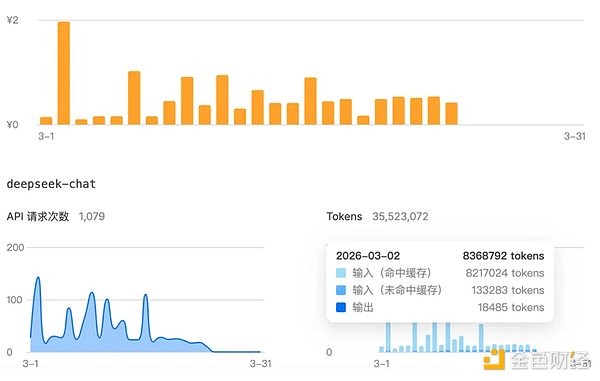

The cost of creating GIFs using the DeepSeek API

To change this, either cheaper token pricing or minimizing token consumption is needed. This depends on model-level optimization and innovation in inference hardware.
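
As a rough illustration of these two levers, the sketch below computes the cost of a task from its token counts and per-million-token prices. The prices are the DeepSeek-V2 launch figures quoted earlier in this article (1 yuan per million input tokens, 2 yuan per million output tokens). The token counts, the `Pricing` class, and the `task_cost_cny` helper are hypothetical, chosen only to show scale; they are not measurements of any real task or the pricing behind the GIF example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in yuan."""
    input_cny_per_million: float
    output_cny_per_million: float


# DeepSeek-V2 launch prices as quoted in this article.
DEEPSEEK_V2 = Pricing(input_cny_per_million=1.0, output_cny_per_million=2.0)


def task_cost_cny(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Total cost = tokens consumed x unit price, summed over input and output."""
    total = (input_tokens * pricing.input_cny_per_million
             + output_tokens * pricing.output_cny_per_million)
    return total / 1_000_000


# Hypothetical workloads: a short chat turn versus a heavy agent task.
chat = task_cost_cny(2_000, 1_000, DEEPSEEK_V2)
agent = task_cost_cny(30_000_000, 2_000_000, DEEPSEEK_V2)
print(f"chat turn:  {chat:.3f} yuan")   # chat turn:  0.004 yuan
print(f"agent task: {agent:.1f} yuan")  # agent task: 34.0 yuan

# Lever 1: halve the unit price. Lever 2: halve the tokens consumed.
cheaper = task_cost_cny(30_000_000, 2_000_000, Pricing(0.5, 1.0))
leaner = task_cost_cny(15_000_000, 1_000_000, DEEPSEEK_V2)
print(cheaper, leaner)  # 17.0 17.0
```

Under these assumed numbers, the unit price is identical for both workloads; the four-orders-of-magnitude gap in cost comes entirely from token volume, and either lever cuts the agent bill by the same amount.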


However, regardless of the circumstances, when the total cost of token usage is not coming down and the effective return on investment is unclear, frantically promoting token consumption, and even linking it to performance, arguably creates not only token anxiety but also AI anxiety.

Earlier still, Huang even urged tech industry leaders to speak cautiously, to avoid triggering irrational public panic about artificial intelligence. This is akin to telling the entire industry: stop talking AI down and creating panic; you should all be burning tokens.

But the question remains: who will solve the price problem? Will it be the long-awaited DeepSeek V4?

I remember that in 2017 there was a viral article titled "The People Miss Zhou Hongyi." Now, the people probably miss the token price war and DeepSeek. At least for the "shrimp farmers," that is most likely the case.

JinseFinance
