Text: Ren Zeping Team

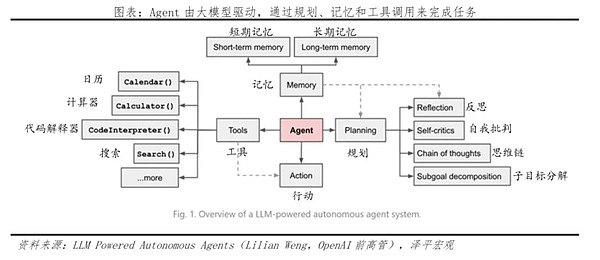

Bill Gates once asserted that "Agents will be the biggest revolution in the history of computer interaction." If generative AI such as ChatGPT is a learned strategist, then AI Agents will be the most powerful executors. An Agent is no longer just an AI chat box. It has "digital hands and feet," so it can directly control apps and browsers, mice and keyboards, and complete complex tasks for you with a single click: purchasing, booking tickets, filing expense reimbursements… As NVIDIA's Jensen Huang said, "We are moving beyond generative AI and into a new era of AI Agents."

The core of this revolution lies in action. Agents are no longer limited to generating text. Through "brain planning + tool invocation + memory and experience," they take over the cumbersome processes of the digital world. You only need to define your goal, and the AI Agent will break it down into steps, work across applications, and get it done for you. OpenAI's Operator, Google's Jarvis taking over Chrome, and Microsoft's Windows 365 for Agents all show the major companies competing to control this super gateway. Reports of Meta's high-profile acquisition of Manus have further fueled the Agent arms race.

However, for Agents to become part of the new infrastructure, standardization must be addressed. The MCP protocol emerged to meet this need. As the "Type-C interface" of the AI era, it lets large models connect to external tools as easily as plugging in a USB drive. Together with Google's A2A protocol, it is helping an interconnected alliance of AI Agents take shape in the silicon-based world. Yet the biggest obstacle to adoption is not technology alone but the restructuring of interests. The ecosystem-wide resistance that ByteDance's Doubao phone ran into reflects the conflict of interests between AI Agents and Apps. This is a battle for traffic, data, and gateway sovereignty in the AI era.
In the future, AI Agents will reshape the world of traffic, and many business models of the Internet era will be rewritten.

1. What Is an AI Agent: How Will It Change Future Life?

First, we need to understand what an AI Agent is. Simply put, if ChatGPT and DeepSeek are AI strategists that give you advice and talk with you, then the AI Agent is the executor. It has not only a brain but also "hands" and "feet," and it uses automated AI capabilities to actually get things done for you.

Just how powerful is the AI Agent? Look at these examples that are happening right now:

Alibaba's Tongyi Qianwen brings together lifestyle-service agents. You simply say, "Order me a latte." It then opens Taobao Flash Sale, selects a store, and places the order. It can even use your past preferences to decide whether to add sugar. Instead of returning a pile of text links, it delivers a completed order.

The first-generation Doubao phone, launched at the end of 2025, features a system-level agent with cross-app permissions. In theory, you no longer need to switch between apps to book tickets, send WeChat messages, or check maps. You issue a command, and the agent schedules the relevant apps in the background to complete the task, breaking down the barriers between apps.

Another example is the browser agent. Google's Jarvis can take over your Chrome browser directly. If you want to book a flight, it can open the webpage, search for flights, compare prices, and even fill in passenger information, handling all the tedious web operations for you.

If generative AI like ChatGPT and DeepSeek shows us AI's "erudition," agent-based AI shows us its "capability." This is a new wave in AI development and a super application that will truly benefit everyone in the future.
At GTC 2025, Jensen Huang laid out his well-known four-stage view of AI:

- The first stage is "perception AI," which lets machines hear and see.
- The second is "generative AI," which lets machines write poetry and paint.
- We are now entering the third stage, "agentic AI," meaning Agents.
- The ultimate goal is "embodied AI."

According to OpenAI's definition, an Agent is a highly independent system that can act on a user's behalf and use tools to complete tasks. Its core difference lies in its "ability to act." It is no longer just a "brain" that chats with you, because it has grown "hands and feet." Generative AI generates content, while Agents generate actions. Anthropic, the company behind Claude, describes Agents as large models that have learned to use tools, which lets them plan their own processes dynamically and complete tasks independently. Bill Gates even asserts that Agents will be the biggest revolution in computer interaction since Windows, and that they will break down the data silos that apps have created. The AI Agent represents a fundamental leap from "conversational AI" to "working AI."

An Agent's workflow rests on three parts:

Brain + Planning: Like a human, it can reason through a complex goal such as "help me plan and book a trip" and break it into a series of steps: checking flights, comparing prices, booking hotels, and drawing up an itinerary. After finishing, it can reflect on and critique its own work, completing the cycle of planning, post-action reflection, and optimization.

Hands & Feet + Tools: It is no longer limited to generating text and can call external tools. For example, it can open a browser to search for the latest information, use a calculator, run programs in a code interpreter, and even operate your calendar and ticketing systems directly.

Memory + Experience: Agents have long-term memory, which stores information that must persist across tasks and sessions. This includes basic user information, preferences, important past interactions, and the knowledge and experience the agent has gathered from earlier tasks. Agents also have short-term memory, which tracks the progress of the current task. By drawing on both, an agent can make the choice that best serves the user.
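The three parts above can be sketched as a toy Python agent. This is a minimal illustration under stated assumptions, not how any particular product works. The tools `search_flights` and `book_hotel`, the `Agent` and `Memory` classes, and the `max_flight_price` preference are all hypothetical. The planning step is hard-coded where a real agent would ask a large model to decompose the goal.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


# Hypothetical tools: stand-ins for real flight-search and hotel-booking services.
def search_flights(city: str) -> list[dict]:
    return [{"flight": "XX101", "price": 820}, {"flight": "XX202", "price": 640}]


def book_hotel(city: str) -> str:
    return f"hotel booked in {city}"


@dataclass
class Memory:
    long_term: dict[str, Any] = field(default_factory=dict)  # persists across tasks (preferences, lessons)
    short_term: list[tuple[str, Any]] = field(default_factory=list)  # progress of the current task only


@dataclass
class Agent:
    tools: dict[str, Callable[[str], Any]]
    memory: Memory

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Brain + planning: a real agent would ask an LLM to break the goal into steps.
        # Here the decomposition is hard-coded for goals shaped like "plan a trip to <city>".
        city = goal.rsplit(" to ", 1)[-1]
        return [("search_flights", city), ("book_hotel", city)]

    def run(self, goal: str) -> list[tuple[str, Any]]:
        self.memory.short_term.clear()
        for tool_name, arg in self.plan(goal):
            result = self.tools[tool_name](arg)  # hands & feet: invoke an external tool
            if tool_name == "search_flights":
                # Memory + experience: apply a stored preference when choosing.
                budget = self.memory.long_term.get("max_flight_price", float("inf"))
                affordable = [f for f in result if f["price"] <= budget]
                result = min(affordable, key=lambda f: f["price"], default=None)
            self.memory.short_term.append((tool_name, result))
        self.reflect(goal)
        return list(self.memory.short_term)

    def reflect(self, goal: str) -> None:
        # Post-action reflection, reduced to its simplest form: remember what was done.
        self.memory.long_term.setdefault("completed_goals", []).append(goal)


if __name__ == "__main__":
    agent = Agent(
        tools={"search_flights": search_flights, "book_hotel": book_hotel},
        memory=Memory(long_term={"max_flight_price": 700}),
    )
    for step in agent.run("plan a trip to Tokyo"):
        print(step)
```

In this toy, short-term memory is cleared at the start of each task. Long-term memory keeps both the budget preference and a record of completed goals, which mirrors the split the text describes.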

In the future, when agents take over everything, everyone will have an agent, or even a whole team of them. Agents build AI into operating systems and software and take over the cumbersome processes of the digital world. Users no longer need to learn complex software. You only need to tell your agent, "Get this done for me."

Three changes are possible in the future:

The first is that apps will recede into the background and some will disappear. App business models such as traffic-driven advertising will be restructured. With agents, phone screens may no longer be cluttered with icons. When hailing a ride, you won't need to open Didi or Uber. You simply tell the agent where you want to go and what kind of car you want. The agent instantly calls the interfaces of various ride-hailing apps in the background and completes the price comparison, order, and payment automatically. Apps will no longer compete for your attention in the foreground. Instead, they will provide service capabilities from the background, and their current business models will have to change.

The second is that agents will replace traditional operating systems, and operating systems will become anthropomorphized. The future operating system will no longer be cold and impersonal. It will be an omniscient, omnipotent silicon-based steward that understands everything about you. In the morning, the agent will adjust your alarm based on your schedule and traffic conditions and have the coffee machine start brewing in advance. While you work, it will follow what you are writing, pull data from backend databases, and help you build charts. It can even remember your friends' birthdays and order flowers from online florists automatically. People will no longer need to learn how to operate the system. Instead, the system will serve people entirely, and the agent will anticipate your intentions.

The third is the ultimate transformation of the human role. When agents can handle everything with a high success rate, the value of humans will be redefined. We will no longer need to polish PowerPoint slides or compare prices ourselves… The only tasks left for humans will be decision-making and aesthetic judgment: telling agents what to do and judging the quality of their results. This is the era of the super-individual. One person plus a tireless team of agents will be more productive than a whole company of the past.
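The ride-hailing scenario in the first change amounts to sending one request out to several providers and keeping the best offer. The sketch below assumes two hypothetical providers with fixed prices (`quote_provider_a`, `quote_provider_b`). Real ride-hailing apps have their own interfaces and may not open them to third-party agents at all.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    provider: str
    car_type: str
    price: float


# Hypothetical provider interfaces with fixed prices; real ride-hailing apps expose
# their own APIs (if they expose one to third-party agents at all).
def quote_provider_a(origin: str, dest: str, car_type: str) -> Quote:
    return Quote("ProviderA", car_type, 38.0)


def quote_provider_b(origin: str, dest: str, car_type: str) -> Quote:
    return Quote("ProviderB", car_type, 35.5)


PROVIDERS = [quote_provider_a, quote_provider_b]


def hail_ride(origin: str, dest: str, car_type: str) -> Quote:
    # Ask every provider for a quote concurrently, in the background.
    with ThreadPoolExecutor() as pool:
        quotes = list(pool.map(lambda get_quote: get_quote(origin, dest, car_type), PROVIDERS))
    # Compare prices and keep the cheapest; a real agent would then place the order and pay.
    return min(quotes, key=lambda q: q.price)


if __name__ == "__main__":
    print(hail_ride("Home", "Airport", "economy"))
```

The sketch queries providers concurrently so that adding more apps does not make the user wait for each one in turn.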

2. Industry Landscape: Manus Sparks a "Catfish Effect," Igniting a Battle for Agent Positioning


In early 2026, the biggest news in the global tech world was Meta's proposed acquisition of Manus for billions of dollars. Why did Zuckerberg buy it? Because Meta was anxious too. Meta had the Llama large models but lacked a super entry point that could reach users directly and solve complex tasks for them. Manus's general-purpose task-planning capability was exactly the missing piece in Meta's AI puzzle. The deal also shows that Chinese AI companies have reached global competitiveness in product strength and engineering capability.

The explosive popularity of Manus and Meta's move signal the start of a battle for dominance in the AI Agent arena:

OpenAI launched Operator, a system-level agent, in January 2025. OpenAI's CTO believes that "understanding the world is only the first step; interacting with it is true intelligence." Operator is built on multimodal models and reinforcement learning. It can look at a screen like a human, understand webpage structure, click buttons, and fill out forms. It has achieved a 70% success rate on complex multi-step tasks such as booking flights and shopping online.

Microsoft launched Windows 365 for Agents. First, it introduced Agent 365, a control platform that helps users manage their agents. Second, it launched Work IQ, an intelligence layer that remembers user preferences and workflows. This layer can predict user actions, recommend agent applications, and support agents customized to each user's characteristics.

Unlike companies that focus on consumer (2C) products, Anthropic concentrates on the underlying "Computer Use" capability, meaning the ability to operate a computer. It positions itself as an infrastructure provider and sells developers worldwide APIs that "enable AI to operate computers." Many startups' agents now rely on Claude's capabilities at their core.
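The screen-operating approach behind Operator and Computer Use can be pictured as a perceive-decide-act loop. In this minimal sketch, `FakeBrowser` and `scripted_model` are hypothetical stand-ins. A real system would send an actual screenshot to a multimodal model and carry out real clicks and keystrokes. The `max_steps` cap reflects the point above that multi-step tasks do not always succeed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    kind: str  # "click", "type", or "done"
    target: str = ""  # hypothetical CSS-style selector for the element to act on
    text: str = ""  # text to type, if any


class FakeBrowser:
    """Hypothetical stand-in for a real browser: it only records the actions it receives."""

    def __init__(self) -> None:
        self.log: list[tuple[str, str, str]] = []

    def screenshot(self) -> str:
        # A real system would capture pixels; a string description is enough here.
        return f"screen after {len(self.log)} actions"

    def execute(self, action: Action) -> None:
        self.log.append((action.kind, action.target, action.text))


def scripted_model(screen: str, step: int) -> Action:
    # Stand-in for a multimodal model. A real model would decide from the screenshot alone;
    # this one simply replays a fixed script for a flight search.
    script = [
        Action("type", "#from", "Beijing"),
        Action("type", "#to", "Shanghai"),
        Action("click", "#search"),
    ]
    return script[step] if step < len(script) else Action("done")


def run_computer_use(browser: FakeBrowser, model: Callable[[str, int], Action], max_steps: int = 10) -> bool:
    """Perceive-decide-act loop; returns True if the model declares the task done."""
    for step in range(max_steps):
        action = model(browser.screenshot(), step)  # perceive the screen, decide the next action
        if action.kind == "done":
            return True
        browser.execute(action)  # act on the page
    return False  # step budget exhausted without finishing


if __name__ == "__main__":
    browser = FakeBrowser()
    print(run_computer_use(browser, scripted_model), browser.log)
```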
Google's Project Jarvis is a super agent that takes over the Chrome browser directly. It can complete web operations for you, such as booking tickets, shopping, and filling out forms. In the Android ecosystem, Google is also embedding Gemini Nano into the core of Android. The logic is that as long as Google holds both Chrome and the Android entry point, its channels into the agent era are secure.

Then there is Musk's Grok, which may evolve into an agent platform that drives the physical world. Musk is currently installing Grok in Tesla cars and Optimus robots. While other agents are still operating your computer, Grok might already be directing Optimus to pour you a coffee. This is the biggest variable in the agent race.

Major Chinese companies are also actively entering the agent field. ByteDance is focusing on its platform tool Coze Space ("Kouzi Space"), which packages industry expertise into reusable Agent Skills. Its core objective is to build a skills marketplace where developers and businesses can create value. This is somewhat like preparing for a future "AI app store." ByteDance also partnered with ZTE to launch the Doubao phone. The phone tried to grant the Agent permissions at the mobile operating-system level, but apps such as WeChat and Taobao quickly pushed back.

Alibaba's advantage lies in its vast, mature commerce and lifestyle-services ecosystem. The strategy of Alibaba's Qianwen App is to act as an intelligent dispatch hub. It uses AI to call backend services such as Taobao e-commerce, local services, payment, and travel directly. This is the most direct way to show an Agent's value in "helping you get things done," but its service scope is tightly bound to the Alibaba ecosystem.

Baidu builds on its existing strengths in Baidu Cloud and Baidu Wenku and positions its intelligent agent as a "super personal assistant." The key is GenFlow's memory center and dispatch capabilities. These integrate deeply with users' personal data and habits to deliver highly personalized services. This approach avoids direct competition in e-commerce and lifestyle services. It focuses instead on personal knowledge management and productivity.
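A skills marketplace and a dispatch hub share one basic mechanism. Capabilities are registered under a name, and incoming requests are routed to the matching one. The sketch below illustrates only that idea. The `skill` decorator, the skill names, and the keyword matching are hypothetical and do not reflect Coze's or Qianwen's actual interfaces. The keyword matching stands in for the model-based intent recognition a real hub would use.

```python
from typing import Callable

SKILLS: dict[str, Callable[[str], str]] = {}
TRIGGERS: dict[str, tuple[str, ...]] = {}


def skill(name: str, *keywords: str):
    """Register a reusable skill with trigger keywords (a hypothetical registry scheme)."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        SKILLS[name] = fn
        TRIGGERS[name] = keywords
        return fn
    return decorator


@skill("order_coffee", "latte", "coffee")
def order_coffee(request: str) -> str:
    return "coffee order placed"  # a real skill would call a food-delivery backend


@skill("book_travel", "flight", "train", "hotel")
def book_travel(request: str) -> str:
    return "travel booking started"  # a real skill would call a travel backend


def dispatch(request: str) -> str:
    """Route a request to the first registered skill whose keyword appears in it."""
    text = request.lower()
    for name, keywords in TRIGGERS.items():
        if any(word in text for word in keywords):
            return SKILLS[name](request)
    return "no matching skill"


if __name__ == "__main__":
    print(dispatch("Order me a latte"))
```

Separating registration from dispatch is what lets third parties add skills without touching the router. That separation is the property a marketplace relies on.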

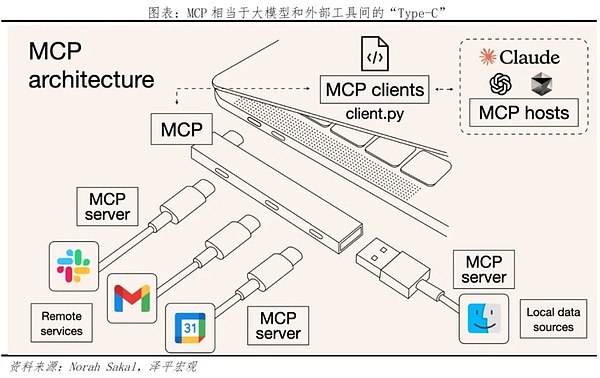

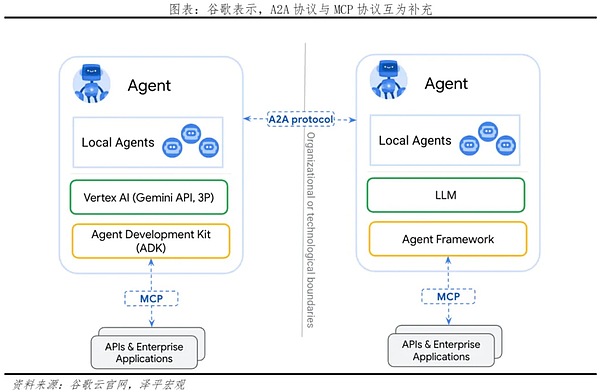

3. Technological Trends: The Standards Battle for AI Agents, MCP and A2A are the Silicon-Based World's "Standardized Language and Tracks"

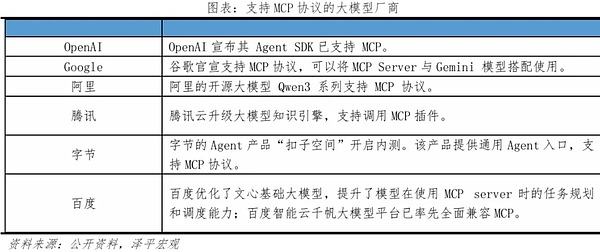

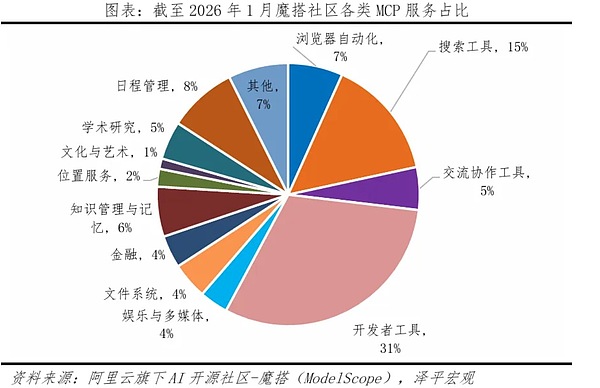

Powerful as AI agents are, if they cannot call external tools smoothly, they are intelligent but mute. In the past, connecting AI to a tool like a calendar or a map required developers to write specialized code for each one. This was a highly inefficient process, like needing a separate key for every lock. Now the industry is undergoing a decisive revolution: protocol standardization. It is the AI era's version of unifying the script and the axle gauge, a single shared system of measurement.

The first major technological trend is the MCP protocol, the Type-C interface of the AI era, which enables plug-and-play. Before Type-C, we had to carry several cables when going out, and chargers from different phone brands were not interchangeable, a huge waste of resources. AI development was similar: every app exposed a different interface. In late 2024, Anthropic proposed MCP (Model Context Protocol) to end this chaos. MCP is the Type-C interface of the AI world. It establishes a common language between large models and external tools, so developers no longer need to reinvent the wheel for each tool. As long as your calendar, map, payment service, and so on support MCP, any MCP-capable large model can use them instantly, like plugging in a USB drive, with sub-second access.
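A rough count shows why a shared protocol saves developers from reinventing the wheel. As a simplification, assume M models and N tools. Without a standard, every model-tool pair needs its own adapter. With a standard, each model and each tool implements the protocol once. The figures 10 and 50 below are illustrative only.

```latex
\begin{aligned}
\text{without a standard:}\quad & M \times N \ \text{adapters} \\
\text{with a shared protocol:}\quad & M + N \ \text{implementations} \\
M = 10,\ N = 50:\quad & 10 \times 50 = 500 \quad\text{vs.}\quad 10 + 50 = 60
\end{aligned}
```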

Although initiated by Anthropic, MCP was designed as an open standard. By early 2026, MCP had become the industry's standard for connecting models to tools.
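On the wire, MCP messages are JSON-RPC 2.0. A client discovers a server's tools with a `tools/list` request and invokes one with `tools/call`, passing the tool `name` and its `arguments`. The Python sketch below only builds and parses such messages. It omits the `initialize` handshake and the transport (stdio or HTTP) that a real session needs. The `create_calendar_event` tool, its arguments, and the sample response are hypothetical.

```python
import json
from itertools import count
from typing import Any, Optional

_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def text_from_tool_result(response: str) -> list[str]:
    """Collect the text items from a tools/call response's result.content list."""
    result = json.loads(response)["result"]
    return [item["text"] for item in result.get("content", []) if item.get("type") == "text"]


# 1. Discover which tools the server offers.
list_request = jsonrpc_request("tools/list")

# 2. Invoke one tool by name; "create_calendar_event" and its arguments are hypothetical.
call_request = jsonrpc_request(
    "tools/call",
    {"name": "create_calendar_event", "arguments": {"title": "Flight to Shanghai", "date": "2026-03-01"}},
)

# 3. A made-up server reply in the shape MCP uses for tool results.
sample_response = json.dumps(
    {"jsonrpc": "2.0", "id": 2, "result": {"content": [{"type": "text", "text": "Event created"}]}}
)

if __name__ == "__main__":
    print(list_request)
    print(call_request)
    print(text_from_tool_result(sample_response))
```

Because the envelope is the same for every tool, a client that can send `tools/call` to one MCP server can send it to any other. This uniformity is what the "Type-C interface" analogy refers to.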
